What Should Be Observed in the Making and Use of Scoring Rubrics


What should be observed in the making and use of scoring rubrics? Were you involved in making the scoring rubrics? How do you make one, and which is easier to construct, analytic or holistic?

The two types of rubrics are analytic and holistic. An analytic rubric resembles a grid that sets the criteria for a student product against levels of performance. The criteria are listed in the leftmost column, and the levels of performance are listed across the top row, using numbers or descriptive tags. The cells in the center of the rubric may contain descriptions of what each criterion looks like at each level of performance, or they may be left blank. With an analytic rubric, each criterion is scored individually.

A holistic rubric, by contrast, consists of a single scale. All the criteria are considered together, and the rater assigns a single score based on an overall judgment of the student work, matching the piece of work to a single description on the scale. The advantages of a holistic rubric are that it saves time by minimizing the number of decisions raters make, it can be applied consistently by trained raters, which increases reliability, and it emphasizes what the learner can demonstrate rather than what he or she cannot do. The disadvantages are that it does not provide specific feedback for improvement, the criteria cannot be weighted, and it can be difficult to select the best description when the student work is at varying levels across the criteria.

Before scoring rubrics were introduced, many teachers and assessors graded students individually and shared their notes privately. This made the teacher-student relationship closer than it otherwise was in the classroom. Teachers gave notes on each student's work for each assignment, which, for a student, was helpful on a per-assignment basis. The notes and critiques were specific and gave a better assessment of what needed to be done for improvement. Overall, before the advent of scoring rubrics, students still had the opportunity for in-depth evaluation, but on a more personal basis.

Rubrics are an important basis for scoring performance tests, projects, and other student outputs. A teacher may have difficulty adopting rubrics that do not measure the target learning outputs of the students, because there will be a mismatch between what was taught and how performance is measured. It is best for the teacher to review the criteria and scoring of a rubric so that the learning assessments match the learning outputs.
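The difference between analytic and holistic scoring described above can be illustrated with a small sketch. The criteria names, level labels, and weights below are hypothetical, chosen only for illustration; they do not come from any particular rubric.

```python
# Hypothetical level labels mapped to points (an assumption for illustration).
LEVELS = {"beginning": 1, "developing": 2, "proficient": 3, "exemplary": 4}


def score_analytic(ratings, weights=None):
    """Score each criterion separately, then combine into a weighted total."""
    weights = weights or {criterion: 1 for criterion in ratings}
    per_criterion = {
        criterion: LEVELS[level] * weights[criterion]
        for criterion, level in ratings.items()
    }
    return per_criterion, sum(per_criterion.values())


def score_holistic(overall_level):
    """Assign one score from a single overall judgment of the work."""
    return LEVELS[overall_level]


# Analytic: one rating per criterion, and criteria can be weighted.
ratings = {
    "content": "proficient",
    "organization": "developing",
    "mechanics": "exemplary",
}
per_criterion, total = score_analytic(
    ratings, weights={"content": 2, "organization": 1, "mechanics": 1}
)
print(per_criterion)  # {'content': 6, 'organization': 2, 'mechanics': 4}
print(total)          # 12

# Holistic: a single overall judgment, with no per-criterion breakdown.
print(score_holistic("proficient"))  # 3
```

The analytic result keeps a per-criterion breakdown that can be returned to the student as feedback, while the holistic result is a single number, which mirrors the trade-off between detail and speed noted above.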

Rubrics are definitely a good thing when implemented and used properly. Of course, a poorly made rubric is not useful. It is usually best when someone who works directly with the students, and who knows what they are learning and what they can achieve, has a hand in making the rubric.

Continuous Improvement Rubrics

What are rubrics?

Rubrics, also commonly referred to as rating scales, are increasingly used to evaluate performance assessments. Much is written about rubrics these days, and a great deal of information is available on the Web. A growing number of ready-made rubrics are available to teachers. Some sample rubrics for second language assessment are included in the Examples section, and we will increase this collection by gathering rubrics from teachers. Some of the many rubrics-related resources are listed in the Resources section.

The origins of the word “rubric” date to the 14th Century: Middle English rubrike red ocher, heading in red letters of part of a book, from Anglo-French, from Latin rubrica, from rubr-, ruber red. Today a rubric is a guide listing specific criteria for grading or scoring academic papers, projects, or tests (Merriam Webster Dictionary). Brookhart, in her book How to Create and Use Rubrics (2013), defines a rubric as: a coherent set of criteria for students’ work that includes descriptions of levels of performance quality on the criteria. When using a rubric to assess student work, it is important to remember that you are trying to find the best description of the student work that you are assessing. Effective rubrics identify the most important qualities of the work and have clear descriptions of performance for each quality.

Why use rubrics?

When we consider how well a learner performed a speaking or writing task, we do not think of the performance as being right or wrong. Instead, we place the performance along a continuum from exceptional to not up to expectations. Rubrics help us to set anchor points along a quality continuum so that we can set reasonable and appropriate expectations for learners and consistently judge how well they have met them. Below are several reasons why rubrics are helpful in assessing student performance:

Evaluating student work by established criteria reduces bias.

Identifying the most salient criteria for evaluating a performance and writing descriptions of excellent performance can help teachers clarify goals and improve their teaching.

Rubrics help learners set goals and assume responsibility for their learning—they know what comprises an optimal performance and can strive to achieve it.

Rubrics used for self- and peer-assessment help learners develop their ability to judge quality in their own and others' work.

Learners receive specific feedback about their areas of strength and weakness and about how to improve their performance.

Learners can use rubrics to assess their own effort and performance, and make adjustments to work before submitting it.

Rubrics allow learners, teachers, and other stakeholders to monitor progress over a period of instruction.

Time spent evaluating performance and providing feedback can be reduced.

When students participate in designing rubrics, they are empowered to become self-directed learners.

Rubrics help teachers assess work based on consistent, agreed upon, and objective criteria.

Well-designed rubrics increase an assessment's construct and content validity by aligning evaluation criteria to standards, curriculum, instruction, and assessment tasks.

Well-designed rubrics increase an assessment's reliability by setting criteria that raters can apply consistently and objectively.
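The reliability point in the list above, that raters can apply the same criteria consistently, can be checked in a simple way by comparing the scores two raters give to the same set of student work. The sketch below computes plain percent agreement; the function name and the sample scores are hypothetical, and percent agreement is only one of several possible consistency measures.

```python
def percent_agreement(rater_a, rater_b):
    """Return the fraction of pieces of work that both raters scored identically."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of work.")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


# Hypothetical rubric scores from two teachers for the same five essays.
teacher_1 = [4, 3, 2, 4, 1]
teacher_2 = [4, 3, 3, 4, 1]

agreement = percent_agreement(teacher_1, teacher_2)
print(f"{agreement:.0%}")  # 80%
```

A low agreement figure would suggest that the level descriptions are being read differently by different raters and may need to be reworded.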

How do students become self-directed learners? How Learning Works: Seven Research-Based Principles for Smart Teaching

#7. To become self-sufficient learners, students must learn to monitor and adjust their approaches to learning.

Principle: To become self-directed learners, students must learn to assess the demands of the task, evaluate their own knowledge and skills, plan their approach, monitor their progress, and adjust strategies as needed. p. 191

Compared to high school, students in college are often required to complete larger, longer-term projects and must do so rather independently. . . . One of the major intellectual challenges students face upon entering college is managing their own learning. Unfortunately, these metacognitive skills tend to fall outside the content area of most courses, and consequently they are often neglected in instruction. p. 191

[Research related to metacognition tells us] that learners need to engage in a variety of processes to monitor and control their learning. [Students need to be taught to]

1. Assess the task at hand, taking into consideration the task’s goals and constraints

2. Evaluate their own knowledge and skills, identifying strengths and weaknesses

3. Plan their approach in a way that accounts for the current situation

4. Apply various strategies to enact their plan, monitoring their progress along the way

5. Reflect on the degree to which their current approach is working so that they can adjust and restart the cycle as needed

pp. 192-193

Assessing the Task

Research suggests that the first phase of metacognition, assessing the task, is not always a natural or easy one for students. . . . Given that students can easily misassess the task at hand, it may not be sufficient simply to remind students to “read the assignment carefully.” In fact, students may need to (1) learn how to assess the task, (2) practice incorporating this step into their planning before it will become habit, and (3) receive feedback on the accuracy of their task assessment before they begin working on a given task. pp. 194-195

[What can be done to address this area?]

Be more explicit than you may think necessary. . . . It may be necessary not only to express . . . goals explicitly but also to articulate what students need to do to meet the assignment’s objectives. . . . [Also], explain to students why these particular goals are important. p. 204

Tell students what you do not want. . . . It can be helpful to identify what you do not mean by referring to common misinterpretations students have shown in the past or by explaining why some pieces of work do not meet your assignment goals. p. 204

Check students’ understanding of the task . . . . Ask them what they think they need to do to complete an assignment or how they plan to prepare for an exam. Then give them feedback. . . . For complex assignments, ask students to rewrite the main goal of the assignment in their own words and then describe the steps that they feel they need to take in order to complete that goal. p. 205

Provide performance criteria with the assignment. . . . This can be done as a checklist that highlights the assignments’ key requirements. . . . Encourage students to refer to the checklist as they work on the assignment, and require them to submit a signed copy of it with the final product. . . . Your criteria could also be communicated to students through a performance rubric that explicitly represents the component parts of the task. . . . In addition to helping students “size up” a particular assignment, rubrics can help students develop other metacognitive habits. p. 205

Identifying Personal Strengths and Weaknesses

Research has found that people in general have great difficulty recognizing their own strengths and weaknesses, and students appear to be especially poor judges of their own knowledge and skills. . . . [Additionally,] students with weaker knowledge and skills are less able to assess their abilities than students with stronger skills. p. 195

[To assist students with identifying their strengths and weaknesses . . . ]

Give early, performance-based assessments. Provide students with ample practice and timely feedback to help them develop a more accurate assessment of their strengths and weaknesses. Do this early enough in the semester so that they have time to learn from your feedback and adjust as necessary. p. 206

Provide opportunities for self-assessment. . . . Give students practice exams (or other assignments) that replicate the kinds of questions they will see on real exams and then provide answer keys so that students can check their own work. p. 206

Planning

Students tend to spend too little time planning, especially when compared to more expert individuals. This lack of planning [leads] novices to waste much of their time because they made false starts and took steps that ultimately did not lead to solutions. . . . Even though planning one’s approach to a task can increase the chances of success, students tend not to recognize the need for it. . . . When students do engage in planning, they often make plans that are not well matched to the task. p. 197

[To teach students how to plan appropriate approaches . . . ]

Have students implement a plan that you provide. . . . [For example], provide students with a set of interim deadlines or a time line for deliverables that reflect the way you would plan the stages of work. . . . Although requiring students to follow a plan that you provide does not give them practice developing their own plan, it does help them think about the component parts of a complex task, as well as their logical sequencing. p. 207

Have students develop their own plan. . . . When students’ planning skills have developed, . . . require them to submit a plan as the first “deliverable” in larger assignments. This could be in the form of a project proposal, an annotated bibliography, or a time line that identifies the key stages of work. Provide feedback on their plan. p. 207

Make planning the central goal of the assignment. . . . Assign some tasks that focus solely on planning. For example, instead of solving or completing a task, students could be asked to plan a solution strategy for a set of problems that involves describing how they would solve each problem. . . . Follow-up assignments can require students to implement their plans and reflect on their strengths and deficiencies. p. 208

Applying the Plan and Monitoring Progress

Once students have a plan and begin to apply [it], they need to monitor their performance [by asking themselves], “Is this strategy working, or would another one be more productive?” . . . . Students who naturally monitor their own progress and try to explain to themselves what they are learning along the way generally show greater learning gains as compared to students who engage less often in self-monitoring and self-explanation activities. . . . Students who [are] taught or prompted to monitor their own understanding or to explain to themselves what they [are] learning [make] greater learning gains relative to students who [are not taught to self-monitor]. [Moreover], research has shown that when students are taught to ask each other a series of comprehension-monitoring questions during reading, they learn to self-monitor more often and hence learn more from what they read. pp. 197-199

[How can you help students learn to self-monitor?]

Provide simple heuristics for self-correction. Teach students basic heuristics for quickly assessing their own work and identifying errors. For example, encourage students to ask themselves, “Is this a reasonable answer, given the problem?”. . . . There are often disciplinary heuristics that students should also learn to apply. p. 208

Have students do guided self-assessments. Require students to assess their own work against a set of criteria that you provide. . . . However, students may not be able to accurately assess their own work without first seeing this skill demonstrated or getting some explicit instruction and practice. p. 209

Require students to reflect on and annotate their own work. . . . Require as a component of the assignment that students explain what they did and why, describe how they responded to various challenges, and so on. . . . Requiring reflection or annotation helps students become more conscious of their own thought processes and work strategies and can lead them to make more appropriate adjustments. p. 209

Use peer review/reader response. Have students analyze their classmates’ work and provide feedback. Reviewing one another’s work can help students evaluate and monitor their own work more effectively and then revise it accordingly. However, peer review is generally only effective when you give student reviewers specific criteria about what to look for and comment on. pp. 209-210

Reflecting and Modifying Approach

Even when students monitor their performance . . . there is no guarantee that they will adjust or try more effective alternatives [because they may be resistant to changing their methods or] may lack alternative strategies. . . . Good problem solvers will try new strategies if their current strategy is not working, whereas poor problem solvers will continue to use a strategy even after it has failed. . . . [Using alternative strategies may not occur] if the perceived cost of switching to a new approach is too high. Such costs include the time and effort it takes to change one’s habits as well as the fact that new approaches, even if better in the long run, tend to under-perform more practiced approaches at first. . . . Research shows that people will often continue to use familiar strategies that work moderately well rather than switch to a new strategy that would work better. pp. 199-200

[If it is so difficult for students to change, how can you get them to reflect on and modify their approaches?]

Provide activities that require students to reflect on their performances. . . . For example, [you could ask students to] answer questions such as: What did you learn from doing this project? What skills do you need to work on? How would you prepare differently or approach the final assignment based on feedback across the semester? How have your skills evolved across the last three assignments? p. 210

Prompt students to analyze the effectiveness of their study skills. When students learn to reflect on the effectiveness of their own approach, they are able to identify problems and make necessary adjustments. A specific example of a self-reflective activity is an “exam wrapper.” . . . An exam wrapper might ask students (1) what kinds of errors they made . . . , (2) how they studied . . . , and (3) what they will do differently in preparation for the next exam. pp. 210-211

Present multiple strategies. Show students multiple ways that a task or problem can be conceptualized, represented, or solved. . . . Students can be asked to solve problems in multiple ways and then discuss the advantages and disadvantages to the different methods. Exposing students to different approaches and analyzing their merits can highlight the value of critical exploration. p. 211

Create assignments that focus on strategizing rather than implementation. Have students propose a range of potential strategies and predict their advantages and disadvantages rather than actually choosing and carrying one through. . . . By putting the emphasis of the assignment on thinking the problem through, rather than solving it, students get practice evaluating strategies in relation to their appropriate or fruitful application. pp. 211-212

Researchers found a pattern that linked students’ beliefs about intelligence with their study strategies and learning beliefs. . . . Students who believe intelligence is fixed have no reason to put in time and effort to improve because they believe their efforts will have little or no effect. . . . Students who believe that intelligence is incremental (that is, skills can be developed that will lead to greater academic success) have a good reason to engage their time and effort in various study strategies because they believe this will improve their skills and hence their outcomes. . . . Beliefs about one’s own abilities can also have an impact on metacognitive processes and learning. pp. 200-201

Implications: Students tend not to apply metacognitive skills as well or as often as they should. This implies that students will often need our support in learning, refining, and effectively applying basic metacognitive skills. To address these needs, then, requires us as instructors to consider the advantages these skills can offer students in the long run and then, as appropriate, to make the development of metacognitive skills part of our course goals. . . . The most natural implication may be to address these issues as directly as possible, by working to raise students’ awareness of the challenges they face and by considering some of the interventions that helped students productively modify their beliefs about intelligence, and at the same time, to set reasonable expectations for how much improvement is likely to occur. pp. 202-203

[To help students overcome their inaccurate understanding of intelligence and learning . . . ]

Address students’ beliefs about learning directly [by] discussing the nature of learning and intelligence . . . to disabuse them of unproductive beliefs (for example, “I can’t draw” or “I can’t do math”) and to highlight the positive effects of practice, effort, and adaptation. p. 212

Broaden students’ understanding of learning [by helping them understand that] you can know something at one level (recognize it) and still not know it (know how to use it). Consider introducing students to those various forms of knowledge so that they can more accurately assess the task, . . . assess their own strengths and weaknesses, . . . and identify gaps in their education. pp. 212-213

Help students set realistic expectations [by recalling] your own frustrations as a student and [describing] how you (or a famous figure in your field) overcame various obstacles. . . . [This can] help students avoid unproductive and often inaccurate attributions about themselves. p. 213

[In addition, you can also help students better understand metacognition by modeling your own metacognitive processes and helping students learn to scaffold their own metacognitive processes.] Scaffolding refers to the process by which instructors provide students with cognitive supports early in their learning, and then gradually remove them as students develop greater mastery and sophistication. . . . Instructors can give students practice working on discrete phases of the metacognitive process in isolation before asking students to integrate them. . . . Breaking down the process highlights the importance of particular stages. . . . A second form of scaffolding involves a progression from tasks with considerable instructor-provided structure to tasks that require greater or even complete student autonomy. p. 215

Scoring rubrics help the teacher show students what the goals are and help students understand why they were given a particular grade or score. Students can see how their performance compares with the prescribed criteria, and it becomes easier for the teacher to maintain a standard that students should adhere to.

What Are Scoring Rubrics?

A scoring rubric is a standard of performance, expressed as criteria, for student work. It is used to score or grade written exams, presentations, and similar work. Rubrics have sets of scores and values for criteria that are grouped into categories. For exams, a rubric provides an objective basis for judging student performance. It is also used to delineate consistent criteria for grading, and it gives students a basis for self-reflection and peer review. A rubric can be used to measure a stated objective and to rate performance. Rubrics are organized to treat the criteria one at a time and to be used with similar, specific tasks, such as those on exams.

Benefits of Scoring Rubrics

The main benefit of a rubric is that it measures student performance. Using rubrics, you can observe the results of students' work. Rubrics let students understand the goals they have to achieve, and they provide a way to develop our goals and communicate them to students.

How Do Rubrics Help?

How students and teachers understand the standards against which work will be measured.

Rubrics are multidimensional sets of scoring guidelines that can be used to provide consistency in evaluating student work. They spell out scoring criteria so that multiple teachers, using the same rubric for a student's essay, for example, would arrive at the same score or grade.

Rubrics are used from the initiation to the completion of a student project. They provide a measurement system for specific tasks and are tailored to each project, so as the projects become more complex, so do the rubrics. Rubrics are great for students: they let students know what is expected of them, and demystify grades by clearly stating, in age-appropriate vocabulary, the expectations for a project. They also help students see that learning is about gaining specific skills (both in academic subjects and in problem-solving and life skills), and they give students the opportunity to do self-assessment to reflect on the learning process.

Teacher Eeva Reeder says using scoring rubrics "demystifies grades and helps students see that the whole object of schoolwork is attainment and refinement of problem-solving and life skills." Rubrics also help teachers authentically monitor a student's learning process and develop and revise a lesson plan. They provide a way for a student and a teacher to measure the quality of a body of work. When a student's assessment of his or her work and a teacher's assessment don't agree, they can schedule a conference to let the student explain his or her understanding of the content and justify the method of presentation.

There are two common types of rubrics: team and project rubrics.

Team Rubrics

A team rubric is a guideline that lets each team member know what is expected of him or her. For example, a team rubric contains detailed descriptions for tasks that will be done while the students are working as a team, and states acceptable degrees of behavior. It also defines the consequences for a team member who is not participating, and lists actions or tasks required of each team member for the completion of a successful project, such as the following:

Did the person participate in the planning process?

How involved was each member?

Was the team member's work to the best of his or her ability?

Shows the quantitative value of the behaviors or actions.

"For as long as assessment is viewed as something we do 'after' teaching and learning are over, we will fail to greatly improve student performance, regardless of how well or how poorly students are currently taught or motivated." (Grant Wiggins, Ed.D., President and Director of Programs, Relearning by Design, Ewing, New Jersey)

Project Rubrics

A project rubric lists the requirements for the completion of a project-based-learning lesson. The product is usually some sort of presentation: a word-processed document, a poster, a model, a multimedia presentation, or a combination of presentations. The teacher can create a project rubric, or students can collaborate, helping to set goals for the project and suggesting how their work should be evaluated. Together, the teacher and the students can answer the following questions:

What is the quality of the work?

How do you know the content is accurate?

How well was the presentation delivered?

How well was the presentation designed?

What was the main idea?

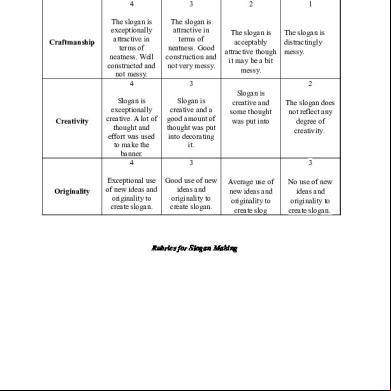

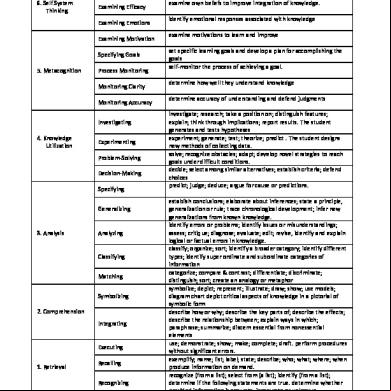

Sample Rubrics

Look at these rubrics from several websites, which show team rubrics and project rubrics for various subjects and grade levels.

Collaboration Rubric for Group Work from a high school science project, San Diego City Schools

Oral Presentation Rubric from a middle school humanities project, Louisiana Voices

Written Report Rubric from SCORE

Math Problem-Solving Rubric from Utah Education Network

Discussion Participation Rubric (PDF) from a ninth grade humanities project, School of the Future

After you've reviewed the sample rubrics, discuss the following:

What do you think of the different styles?

Do they meet your expectations of rubrics for the designated grade levels? Why, or why not?

Which one most closely suits your vision of what you will need? Why?

A recent blog post on Edutopia.org by Andrew Miller, "Tame the Beast: Tips for Designing and Using Rubrics," has some great advice for how to work with rubrics.

Rubrics as a Tool for Learning and Assessment: What Do Baccalaureate Students Think?

Abstract

Rubrics are increasingly used as tools to evaluate student work. This study examined BSW students' perceptions of the benefits and challenges of using rubrics. Pre- and posttest questionnaires were administered to 35 students in two sections of a diversity course. Students judged the use of rubrics favorably. Rubrics communicated the instructors' expectations, clarified how to write course assignments, explained grade and point deductions and, in general, made course expectations clearer. Students suggested that the rubric design should be self-explanatory and easy to follow. If carefully developed, rubrics may be a useful tool for advancing student learning in social work programs.

Rubrics: Tools for Making Learning Goals and Evaluation Criteria Explicit for Both Teachers and Learners

Deborah Allen and Kimberly Tanner

INTRODUCTION

Introduction of new teaching strategies often expands the expectations for student learning, creating a parallel need to redefine how we collect the evidence that assures both us and our students that these expectations are in fact being met. The default assessment strategy of the typical large, introductory, college-level science course, the multiple-choice (fixed response) exam, when used to best advantage can provide feedback about what students know and recall about key concepts. Leaving aside the difficulty inherent in designing a multiple-choice exam that captures deeper understandings of course material, its limitations become particularly notable when learning objectives include what students are able to do as well as know as the result of time spent in a course. If we want students to build their skill at conducting guided laboratory investigations, developing reasoned arguments, or communicating their ideas, other means of assessment such as papers, demonstrations (the “practical exam”), other demonstrations of problem solving, model building, debates, or oral presentations, to name a few, must be enlisted to serve as benchmarks of progress and/or in the assignment of grades.

What happens, however, when students are novices at responding to these performance prompts when they are used in the context of science learning, and faculty are novices at communicating to students what their expectations for a high-level performance are? The more familiar terrain of the multiple-choice exam can lull both students and instructors into a false sense of security about the clarity and objectivity of the evaluation criteria (Wiggins, 1989) and make these other types of assessment strategies seem subjective and unreliable (and sometimes downright unfair) by comparison. In a worst-case scenario, the use of alternatives to the conventional exam to assess student learning can lead students to feel that there is an implicit or hidden curriculum: the private curriculum that seems to exist only in the mind's eye of a course instructor.

Use of rubrics provides one way to address these issues. Rubrics not only can be designed to formulate standards for levels of accomplishment and used to guide and improve performance but also they can be used to make these standards clear and explicit to students. Although the use of rubrics has become common practice in the K–12 setting (Luft, 1999), the good news for those instructors who find the idea attractive is that more and more examples of the use of rubrics are being noted at the college and university level, with a variety of applications (Ebert-May, undated; Ebert-May et al., 1997; Wright and Boggs, 2002; Moni et al., 2005; Porter, 2005; Lynd-Balta, 2006).

WHAT IS A RUBRIC?

Although definitions for the word “rubric” abound, for the purposes of this feature article we use the word to denote a type of matrix that provides scaled levels of achievement or understanding for a set of criteria or dimensions of quality for a given type of performance, for example, a paper, an oral presentation, or use of teamwork skills. In this type of rubric, the scaled levels of achievement (gradations of quality) are indexed to a desired or appropriate standard (e.g., to the performance of an expert or to the highest level of accomplishment evidenced by a particular cohort of students). The possible levels of attainment for each of the criteria or dimensions of performance are described fully enough to make them useful for judgment of, or reflection on, progress toward valued objectives (Huba and Freed, 2000).

A good way to think about what distinguishes a rubric from an explanation of an assignment is to compare it with a more common practice. When communicating to students our expectations for writing a lab report, for example, we often start with a list of the qualities of an excellent report to guide their efforts toward successful completion; we may have drawn on our knowledge of how scientists report their findings in peer-reviewed journals to develop the list. This checklist of criteria is easily turned into a scoring sheet (to return with the evaluated assignment) by the addition of checkboxes for indicating either a “yes-no” decision about whether each criterion has been met or the extent to which it has been met. Such a checklist in fact has a number of fundamental features in common with a rubric (Bresciani et al., 2004), and it is a good starting point for beginning to construct a rubric. Figure 1 gives an example of such a scoring checklist that could be used to judge a high school student poster competition.

Figure 1. An example of a scoring checklist that could be used to judge a high school student poster competition.

However, what is referred to as a “full rubric” is distinguished from the scoring checklist by its more extensive definition and description of the criteria or dimensions of quality that characterize each level of accomplishment. Table 1 provides one example of a full rubric (of the analytical type, as defined in the paragraph below) that was developed from the checklist in Figure 1. This example uses the typical grid format in which the performance criteria or dimensions of quality are listed in the rows, and the successive cells across the three columns describe a specific level of performance for each criterion. The full rubric in Table 1, in contrast to the checklist that only indicates whether a criterion exists (Figure 1), makes it far clearer to a student presenter what the instructor is looking for when evaluating student work.

Table 1. A full analytical rubric for assessing student poster presentations that was developed from the scoring checklist (simple rubric) from Figure 1

DESIGNING A RUBRIC

A more challenging aspect of using a rubric can be finding one that provides a close enough match to a particular assignment with a specific set of content and process objectives. This challenge is particularly true of so-called analytical rubrics. Analytical rubrics use discrete criteria to set forth more than one measure of the levels of an accomplishment for a particular task, as distinguished from holistic rubrics, which provide more general, uncategorized (“lumped together”) descriptions of overall dimensions of quality for different levels of mastery. Many users of analytical rubrics resort to developing their own rubric to have the best match between an assignment and its objectives for a particular course.

As an example, examine the two rubrics presented in Tables 2 and 3, in which Table 2 shows a holistic rubric and Table 3 shows an analytical rubric. These two versions of a rubric were developed to evaluate student essay responses to a particular assessment prompt. In this case the prompt is a challenge in which students are to respond to the statement, “Plants get their food from the soil. What about this statement do you agree with? What about this statement do you disagree with? Support your position with as much detail as possible.” This assessment prompt can serve both as a preassessment, to establish what ideas students bring to the teaching unit, and as a postassessment in conjunction with the study of photosynthesis. As such, the rubric is designed to evaluate student understanding of the process of photosynthesis, the role of soil in plant growth, and the nature of food for plants. The maximum score using either the holistic or the analytical rubric would be 10, with 2 points possible for each of five criteria.
The holistic rubric outlines five criteria by which student responses are evaluated, puts a 3-point scale on each of these criteria, and holistically describes what a 0-, 1-, or 2-point answer would contain. However, this holistic rubric stops short of defining in detail the specific concepts that would qualify an answer for 0, 1, or 2 points on each criterion's scale. The analytical rubric shown in Table 3 does define these concepts for each criterion, and it is in fact a fuller development of the holistic rubric shown in Table 2. As mentioned, the development of an analytical rubric is challenging in that it pushes the instructor to define specifically the language and depth of knowledge that students need to demonstrate competency, and it is an attempt to make discrete what is fundamentally a fuzzy, continuous distribution of ways an individual could construct a response. As such, informal analysis of student responses can often play a large role in shaping and revising an analytical rubric, because student answers may hold conceptions and misconceptions that have not been anticipated by the instructor.
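The scoring arithmetic described above (five criteria, each scored 0, 1, or 2, for a maximum of 10) can be sketched in a few lines of Python. This is only an illustration: the criterion names below are hypothetical placeholders, since the actual criteria appear in Tables 2 and 3, which are not reproduced in this text.

```python
from typing import Mapping

# Hypothetical placeholder names; the real criteria are listed in Tables 2 and 3.
CRITERIA = (
    "criterion_1",
    "criterion_2",
    "criterion_3",
    "criterion_4",
    "criterion_5",
)
VALID_POINTS = (0, 1, 2)           # the 3-point scale applied to each criterion
MAX_SCORE = max(VALID_POINTS) * len(CRITERIA)


def total_score(scores: Mapping[str, int]) -> int:
    """Sum the per-criterion points after checking that every criterion
    was scored and that each score lies on the 0-2 scale."""
    missing = [name for name in CRITERIA if name not in scores]
    if missing:
        raise ValueError(f"unscored criteria: {missing}")
    for name in CRITERIA:
        if scores[name] not in VALID_POINTS:
            raise ValueError(f"{name}: score {scores[name]} is not 0, 1, or 2")
    return sum(scores[name] for name in CRITERIA)


if __name__ == "__main__":
    example = dict(zip(CRITERIA, [2, 1, 0, 1, 2]))
    print(total_score(example), "out of", MAX_SCORE)
```

Keeping each criterion's score separate, rather than recording only the total, preserves the per-criterion detail that distinguishes an analytical rubric from a single overall judgment.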

Table 2. Holistic rubric for responses to the challenge statement, “Plants get their food from the soil”

Table 3. An analytical rubric for responses to the challenge statement, “Plants get their food from the soil”

The various approaches to constructing rubrics can, in a sense, also be characterized as holistic or analytical. Those who offer recommendations about how to build rubrics often approach the task from the perspective of describing the essential features of rubrics (Huba and Freed, 2000; Arter and McTighe, 2001), or by outlining a discrete series of steps to follow one by one (Moskal, 2000; Mettler, 2002; Bresciani et al., 2004; MacKenzie, 2004). Regardless of the recommended approach, there is general agreement that a rubric designer must approach the task with a clear idea of the desired student learning outcomes (Luft, 1999) and, perhaps more importantly, with a clear picture of what meeting each outcome “looks like” (Luft, 1999; Bresciani et al., 2004). If this picture remains fuzzy, perhaps the outcome is not observable or measurable and thus not “rubric-worthy.”

Reflection on one's particular answers to two critical questions (“What do I want students to know and be able to do?” and “How will I know when they know it and can do it well?”) is not only essential to beginning construction of a rubric but also can help confirm the choice of a particular assessment task as being the best way to collect evidence about how the outcomes have been met. A first step in designing a rubric, the development of a list of qualities in which the learner should demonstrate proficiency by completing an assessment task, naturally flows from this prior rumination on outcomes and on ways of collecting evidence that students have met the outcome goal. A good way to get started with compiling this list is to view existing rubrics for a similar task, even if they were designed for younger or older learners or for different subject areas. For example, if one sets out to develop a rubric for a class presentation, it is helpful to review the criteria used in a rubric for oral communication in a graduate program (organization, style, use of communication aids, depth and accuracy of content, use of language, personal appearance, responsiveness to audience; Huba and Freed, 2000) to stimulate reflection on and analysis of what criteria (dimensions of quality) align with one's own desired learning outcomes. There is technically no limit to the number of criteria that can be included in a rubric, other than presumptions about the learners' ability to digest and thus make use of the information that is provided. In the example in Table 1, only three criteria were used, as judged appropriate for the desired outcomes of the high school poster competition.

After this list of criteria is honed and pruned, the dimensions of quality and proficiency will need to be separately described (as in Table 1), and not just listed. The extent and nature of this commentary depends upon the type of rubric, analytical or holistic. Expanding the criteria is inherently difficult, because it requires thorough familiarity both with the elements comprising the highest standard of performance for the chosen task and with the range of capabilities of learners at a particular developmental level. A good way to get started is to think about how the attributes of a truly superb performance could be characterized in each of the important dimensions, that is, the level of work to which students should aspire.
Common advice (Moskal, 2000) is to avoid use of words that connote value judgments in these commentaries, such as “creative” or “good” (as in “the use of scientific terminology language is ‘good’”). These are essentially so general as to be valueless in terms of their ability to guide a learner to emulate specific standards for a task, and although it is admittedly difficult, they need to be defined in a rubric. Again, perusal of existing examples is a good way to get started with writing the full descriptions of criteria. Fortunately, there are a number of data banks that can be searched for rubric templates of virtually all types (Chicago Public Schools, 2000; Arter and McTighe, 2001; Shrock, 2006; Advanced Learning Technologies, 2006; University of Wisconsin-Stout, 2006). The final step toward filling in the grid of the rubric is to benchmark the remaining levels of mastery or gradations of quality. There are a number of descriptors that are conventionally used to denote the levels of mastery in addition to the conventional excellent-to-poor scale (with or without accompanying symbols for letter grades), and several examples from among the more common of these are listed below:

Scale 1: Exemplary, Proficient, Acceptable, Unacceptable

Scale 2: Substantially Developed, Mostly Developed, Developed, Underdeveloped

Scale 3: Distinguished, Proficient, Apprentice, Novice

Scale 4: Exemplary, Accomplished, Developing, Beginning

In this case, unlike the number of criteria, there might be a natural limit to how many levels of mastery need this expanded commentary. Although it is common to have multiple levels of mastery, as in the examples above, some educators (Bresciani et al., 2004) feel strongly that it is not possible for individuals to make operational sense out of inclusion of more than three levels of mastery (in essence, a “there, somewhat there, not there yet” scale). As expected, the final steps in having a “usable” rubric are to ask both students and colleagues to provide feedback on the first draft, particularly with respect to the clarity and gradations of the descriptions of criteria for each level of accomplishment, and to try out the rubric using past examples of student work. Huba and Freed (2000) offer the interesting recommendation that the descriptions for each level of performance provide a “real world” connection by stating the implications for accomplishment at that level. This description of the consequences could be included in a criterion called “professionalism.” For example, in a rubric for writing a lab report, at the highest level of mastery the rubric could state, “this report of your study would persuade your peers of the validity of your findings and would be publishable in a peer-reviewed journal.” Acknowledging this recommendation in the construction of a rubric might help to steer students toward the perception that the rubric represents the standards of a profession, and away from the perception that a rubric is just another way to give a particular teacher what he or she wants (Andrade and Du, 2005). As a further aid for beginning instructors, a number of Web sites, both commercial and open access, have tools for online construction of rubrics from templates, for example, Rubistar (Advanced Learning Technologies, 2006) and TeAch-nology (TeAch-nology, undated).
These tools allow the would-be “rubrician” to select from among the various types of rubrics, criteria, and rating scales (levels of mastery). Once these choices are made, editable descriptions fall into place in the proper cells in the rubric grid. The rubrics are stored in the site databases, but typically they can be downloaded using conventional word processing or spreadsheet software. Further editing can result in a rubric uniquely suitable for your teaching/learning goals.

ANALYZING AND REPORTING INFORMATION GATHERED FROM A RUBRIC

Whether used with students to set learning goals, as scoring devices for grading purposes, to give formative feedback to students about their progress toward important course outcomes, or for assessment of curricular and course innovations, rubrics allow for both quantitative and qualitative analysis of student performance. Qualitative analysis could yield narrative accounts of where students in general fell in the cells of the rubric, and it can provide interpretations, conclusions, and recommendations related to student learning and development. For quantitative analysis the various levels of mastery can be assigned different numerical scores to yield quantitative rankings, as has been done for the sample rubric in Table 1. If desired, the criteria can be given different scoring weightings (again, as in the poster presentation rubric in Table 1) if they are not considered to have equal priority as outcomes for a particular purpose. The total scores given to each example of student work on the basis of the rubric can be converted to a grading scale. Overall performance of the class could be analyzed for each of the criteria competencies.

Multiple-choice exams have the advantage that they can be computer or machine scored, allowing for analysis and storage of more specific information about different content understandings (particularly misconceptions) for each item, and for large numbers of students. The standard rubric-referenced assessment is not designed to easily provide this type of analysis about specific details of content understanding; for the types of tasks for which rubrics are designed, content understanding is typically displayed by some form of narrative, free-choice expression. To try to capture both the benefits of the free-choice narrative and generate an in-depth analysis of students' content understanding, particularly for large numbers of students, a special type of rubric, called the double-digit, is typically used. A large-scale example of use of this type of scoring rubric is given by the Trends in International Mathematics and Science Study (1999). In this study, double-digit rubrics were used to code and analyze student responses to short essay prompts.

To better understand how and why these rubrics are constructed and used, refer to the example provided in Figure 2. This double-digit rubric was used to score and analyze student responses to an essay prompt about ecosystems that was accompanied by the standard “sun-tree-bird” diagram (a drawing of the sun, a tree, and other plants; various primary and secondary consumers; and some not well-identifiable decomposers, with interconnecting arrows that could be interpreted as energy flow or cycling of matter). A brief narrative, summarizing the “big ideas” that could be included in a complete response, along with a sample response that captures many of these big ideas, accompanies the actual rubric.
The rubric itself specifies major categories of student responses, from complete to various levels of incompleteness. Each level is assigned one of the first digits of the scoring code, which could actually correspond to a conventional point total awarded for a particular response. In the example in Figure 2, a complete response is awarded a maximum number of 4 points, and the levels of partially complete answers, successively lower points. Here, the “incomplete” and “no response” categories are assigned first digits of 7 and 9, respectively, rather than 0 for clarity in coding; they can be converted to zeroes for averaging and reporting of scores.
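A minimal Python sketch of how double-digit codes might be tallied follows. It assumes that the first digit of a code equals the points awarded (the text says only that it "could" correspond to a point total) and that first digits 7 and 9 convert to zero, as described above. The function names and the sample codes are hypothetical; only code 31 is taken from the article.

```python
from collections import Counter
from typing import Iterable, Tuple

# First digits reserved for "incomplete" and "no response"; both count as 0 points.
ZERO_POINT_FIRST_DIGITS = {7, 9}


def split_code(code: int) -> Tuple[int, int]:
    """Split a two-digit code into (first digit, second digit)."""
    if not 10 <= code <= 99:
        raise ValueError(f"{code} is not a two-digit code")
    return divmod(code, 10)


def points_for_code(code: int) -> int:
    """Assumption: the first digit is the point value, except 7 and 9 -> 0."""
    first, _second = split_code(code)
    return 0 if first in ZERO_POINT_FIRST_DIGITS else first


def summarize(codes: Iterable[int]) -> Tuple[float, Counter]:
    """Return the mean point score and a tally of how often each code occurs."""
    codes = list(codes)
    if not codes:
        raise ValueError("no codes to summarize")
    points = [points_for_code(c) for c in codes]
    return sum(points) / len(points), Counter(codes)


if __name__ == "__main__":
    # Hypothetical class results; only code 31 appears in the article.
    mean, tally = summarize([40, 31, 31, 20, 70, 99])
    print(f"mean points: {mean}")
    print("most common codes:", tally.most_common(2))
```

Averaging uses the converted point values, while the tally keeps the full two-digit codes, so the frequency of each type of response remains visible alongside the scores.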

Figure 2. A double-digit rubric used to score and analyze student responses to an essay prompt about ecosystems.

The second digit is assigned to types of student responses in each category, including the common approaches and misconceptions. For example, code 31 under the first partial-response category denotes a student response that “talks about energy flow and matter cycling, but does not mention loss of energy from the system in the form of heat.” The sample double-digit rubric in Figure 2 shows the code numbers that were assigned after a “first pass” through a relatively small number of sample responses. Additional codes were later assigned as more responses were reviewed and the full variety of student responses was revealed. In both cases, the second digit of 9 was reserved for a general description that could be assigned to a response that might be unique to one or only a few students but nevertheless belonged in a particular category. When refined by several assessments of student work by a number of reviewers, this type of rubric can provide a means for a very specific quantitative and qualitative understanding, analysis, and reporting of the trends in student understanding of important concepts. A high number of 31 scores, for example, could provide a major clue about deficiencies in past instruction and thus goals for future efforts. However, this type of analysis remains expensive, in that scores must be assigned and entered into a database, rather than the simple collection of student responses possible with a multiple-choice test.

WHY USE RUBRICS?

When used as teaching tools, rubrics not only make the instructor's standards and resulting grading explicit, but they can give students a clear sense of what the expectations are for a high level of performance on a given assignment, and how they can be met. This use of rubrics can be most important when the students are novices with respect to a particular task or type of expression (Bresciani et al., 2004).
From the instructor's perspective, although the time expended in developing a rubric can be considerable, once rubrics are in place they can streamline the grading process. The more specific the rubric, the less the requirement for spontaneous written feedback for each piece of student work, the type that is usually used to explain and justify the grade. Although provided with fewer written comments that are individualized for their work, students nevertheless receive informative feedback. When information from rubrics is analyzed, a detailed record of students' progress toward meeting desired outcomes can be monitored and then provided to students so that they may also chart their own progress and improvement. With team-taught courses or multiple sections of the same course, rubrics can be used to make faculty standards explicit to one another, and to calibrate subsequent expectations. Good rubrics can be critically important when student work in a large class is being graded by teaching assistants.

Finally, by their very nature, rubrics encourage reflective practice on the part of both students and teachers. In particular, the act of developing a rubric, whether or not it is subsequently used, instigates a powerful consideration of one's values and expectations for student learning, and the extent to which these expectations are reflected in actual classroom practices. If rubrics are used in the context of students' peer review of their own work or that of others, or if students are involved in the process of developing the rubric, these processes can spur the development of their ability to become self-directed and help them develop insight into how they and others learn (Luft, 1999).

ACKNOWLEDGMENTS

We gratefully acknowledge the contribution of Richard Donham (Mathematics and Science Education Resource Center, University of Delaware) for development of the double-digit rubric in Figure 2.

REFERENCES

Advanced Learning Technologies, University of Kansas. Rubistar. 2006. [28 May 2006]. http://rubistar.4teachers.org/index.php.

Andrade H., Du Y. Student perspectives on rubric-referenced assessment. Pract. Assess. Res. Eval. 2005;10. [18 May 2006]. http://pareonline.net/pdf/v10n3.pdf.

Arter J. A., McTighe J. Scoring Rubrics in the Classroom: Using Performance Criteria for Assessing and Improving Student Performance. Thousand Oaks, CA: Corwin Press; 2001.

Bresciani M. J., Zelna C. L., Anderson J. A. Assessing Student Learning and Development: A Handbook for Practitioners. Washington, DC: National Association of Student Personnel Administrators; 2004. Criteria and rubrics; pp. 29–37.

Chicago Public Schools. The Rubric Bank. 2000. [18 May 2006]. http://intranet.s.k12.il.us/Assessments/Ideas_and_Rubrics/Rubric_Bank/rubric_bank.html.

Ebert-May D., Brewer C., Allred S. Innovation in large lectures—teaching for active learning. Bioscience. 1997;47:601–607.

Ebert-May D. Scoring Rubrics. Field-tested Learning Assessment Guide. undated. [18 May 2006]. http://www.wcer.wisc.edu/archive/cl1/flag/cat/catframe.htm.

Huba M. E., Freed J. E. Learner-Centered Assessment on College Campuses. Boston: Allyn and Bacon; 2000. Using rubrics to provide feedback to students; pp. 151–200.

Luft J. A. Rubrics: design and use in science teacher education. J. Sci. Teach. Educ. 1999;10:107–121.

Lynd-Balta E. Using literature and innovative assessments to ignite interest and cultivate critical thinking skills in an undergraduate neuroscience course. CBE Life Sci. Educ. 2006;5:167–174.

MacKenzie W. NETS●S Curriculum Series: Social Studies Units for Grades 9–12. Washington, DC: International Society for Technology in Education; 2004. Constructing a rubric; pp. 24–30.

Mettler C. A. Designing scoring rubrics for your classroom. In: Boston C., editor. Understanding Scoring Rubrics: A Guide for Teachers. University of Maryland, College Park, MD: ERIC Clearinghouse on Assessment and Evaluation; 2002. pp. 72–81.

Moni R., Beswick W., Moni K. B. Using student feedback to construct an assessment rubric for a concept map in physiology. Adv. Physiol. Educ. 2005;29:197–203.

Moskal B. M. Scoring Rubrics Part II: How? ERIC/AE Digest, ERIC Clearinghouse on Assessment and Evaluation. Eric Identifier #ED446111. 2000. [21 April 2006]. http://www.eric.ed.gov.

Porter J. R. Information literacy in biology education: an example from an advanced cell biology course. Cell Biol. Educ. 2005;4:335–343.

Shrock K. Kathy Shrock's Guide for Educators. 2006. [5 June 2006]. http://school.discovery.com/schrockguide/assess.html#rubrics.

TeAch-nology, Inc. TeAch-nology. undated. [7 June 2006]. http://teachnology.com/web_tools/rubrics.

Trends in International Mathematics and Science Study. Science Benchmarking Report, 8th Grade, Appendix A: TIMSS Design and Procedures. 1999. [9 June 2006]. http://timss.bc.edu/timss1999b/sciencebench_report/t99bscience_A.html.

University of Wisconsin–Stout. Teacher Created Rubrics for Assessment. 2006. [7 June 2006]. http://www.uwstout.edu/soe/profdev/rubrics.shtml.

Wiggins G. A true test: toward more authentic and equitable assessment. Phi Delta Kappan. 1989;49:703–713.

Wright R., Boggs J. Learning cell biology as a team: a project-based approach to upper-division cell biology. Cell Biol. Educ. 2002;1:145–153.

Rubrics are definitely a good thing when implemented and used properly. Of course, a poorly made rubric is not useful. It's usually best when someone who works directly with the students, and who knows what they can achieve and what they are learning, has a hand in making the rubric.

Continuous Improvement Rubrics

What are rubrics?

Rubrics, also commonly referred to as rating scales, are increasingly used to evaluate performance assessments. Much is written about rubrics these days, and a great deal of information is available on the Web. There is a growing number of ready-made rubrics available to teachers. Some sample rubrics for second language assessment are included in the Examples section, and we will increase this collection by gathering rubrics from teachers. Some of the many rubrics-related resources are listed in the Resources section.

The origins of the word “rubric” date to the 14th century: Middle English rubrike, red ocher, heading in red letters of part of a book, from Anglo-French, from Latin rubrica, from rubr-, ruber, red. Today a rubric is a guide listing specific criteria for grading or scoring academic papers, projects, or tests (Merriam Webster Dictionary). Brookhart, in her book How to Create and Use Rubrics (2013), defines a rubric as a coherent set of criteria for students’ work that includes descriptions of levels of performance quality on the criteria. When using a rubric to assess student work, it is important to keep in mind that you are trying to find the best description of the student work that you are assessing. Effective rubrics identify the most important qualities of the work and have clear descriptions of performance for each quality.

Why use rubrics?

When we consider how well a learner performed a speaking or writing task, we do not think of the performance as being right or wrong. Instead, we place the performance along a continuum from exceptional to not up to expectations. Rubrics help us to set anchor points along a quality continuum so that we can set reasonable and appropriate expectations for learners and consistently judge how well they have met them. Below are several reasons why rubrics are helpful in assessing student performance:

Evaluating student work by established criteria reduces bias.

Identifying the most salient criteria for evaluating a performance and writing descriptions of excellent performance can help teachers clarify goals and improve their teaching.

Rubrics help learners set goals and assume responsibility for their learning—they know what comprises an optimal performance and can strive to achieve it.

Rubrics used for self- and peer-assessment help learners develop their ability to judge quality in their own and others' work.

Learners receive specific feedback about their areas of strength and weakness and about how to improve their performance.

Learners can use rubrics to assess their own effort and performance, and make adjustments to work before submitting it.

Rubrics allow learners, teachers, and other stakeholders to monitor progress over a period of instruction.

Time spent evaluating performance and providing feedback can be reduced.

When students participate in designing rubrics, they are empowered to become self-directed learners.

Rubrics help teachers assess work based on consistent, agreed upon, and objective criteria.

Well-designed rubrics increase an assessment's construct and content validity by aligning evaluation criteria to standards, curriculum, instruction, and assessment tasks.

Well-designed rubrics increase an assessment's reliability by setting criteria that raters can apply consistently and objectively.

How do students become self-directed learners? How Learning Works: Seven Research-Based Principles for Smart Teaching

#7. To become self-sufficient learners, students must learn to monitor and adjust their approaches to learning.

Principle: To become self-directed learners, students must learn to assess the demands of the task, evaluate their own knowledge and skills, plan their approach, monitor their progress, and adjust strategies as needed. p. 191

Compared to high school, students in college are often required to complete larger, longer-term projects and must do so rather independently. . . . One of the major intellectual challenges students face upon entering college is managing their own learning. Unfortunately, these metacognitive skills tend to fall outside the content area of most courses, and consequently they are often neglected in instruction. p. 191

[Research related to metacognition tells us] that learners need to engage in a variety of processes to monitor and control their learning. [Students need to be taught to]
1. Assess the task at hand, taking into consideration the task’s goals and constraints
2. Evaluate their own knowledge and skills, identifying strengths and weaknesses
3. Plan their approach in a way that accounts for the current situation
4. Apply various strategies to enact their plan, monitoring their progress along the way
5. Reflect on the degree to which their current approach is working so that they can adjust and restart the cycle as needed
pp. 192-193

Assessing the Task

Research suggests that the first phase of metacognition—assessing the task—is not always a natural or easy one for students. . . . Given that students can easily misassess the task at hand, it may not be sufficient simply to remind students to “read the assignment carefully.” In fact, students may need to (1) learn how to assess the task, (2) practice incorporating this step into their planning before it will become habit, and (3) receive feedback on the accuracy of their task assessment before they begin working on a given task. pp. 194-195 [What can be done to address this area?]

Be more explicit than you may think necessary. . . . It may be necessary not only to express . . . goals explicitly but also to articulate what students need to do to meet the assignment’s objectives. . . . [Also], explain to students why these particular goals are important. p. 204

Tell students what you do not want. . . . It can be helpful to identify what you do not mean by referring to common misinterpretations students have shown in the past or by explaining why some pieces of work do not meet your assignment goals. p. 204

Check students’ understanding of the task . . . . Ask them what they think they need to do to complete an assignment or how they plan to prepare for an exam. Then give them feedback . . . . For complex assignments, ask students to rewrite the main goal of the assignment in their own words and then describe the steps that they feel they need to take in order to complete that goal. p. 205

Provide performance criteria with the assignment. . . . This can be done as a checklist that highlights the assignment’s key requirements. . . . Encourage students to refer to the checklist as they work on the assignment, and require them to submit a signed copy of it with the final product. . . . Your criteria could also be communicated to students through a performance rubric that explicitly represents the component parts of the task. . . . In addition to helping students “size up” a particular assignment, rubrics can help students develop other metacognitive habits. p. 205

Identifying Personal Strengths and Weaknesses

Research has found that people in general have great difficulty recognizing their own strengths and weaknesses, and students appear to be especially poor judges of their own knowledge and skills. . . . [Additionally,] students with weaker knowledge and skills are less able to assess their abilities than students with stronger skills. p. 195 [To assist students with identifying their strengths and weaknesses . . . ]

Give early, performance-based assessments. Provide students with ample practice and timely feedback to help them develop a more accurate assessment of their strengths and weaknesses. Do this early enough in the semester so that they have time to learn from your feedback and adjust as necessary. p. 206

Provide opportunities for self-assessment. . . . Give students practice exams (or other assignments) that replicate the kinds of questions they will see on real exams and then provide answer keys so that students can check their own work. p. 206

Planning

Students tend to spend too little time planning, especially when compared to more expert individuals. This lack of planning [leads] novices to waste much of their time because they made false starts and took steps that ultimately did not lead to solutions. . . . Even though planning one’s approach to a task can increase the chances of success, students tend not to recognize the need for it. . . . When students do engage in planning, they often make plans that are not well matched to the task. p. 197 [To teach students how to plan appropriate approaches . . . ]

Have students implement a plan that you provide. . . . [For example], provide students with a set of interim deadlines or a time line for deliverables that reflect the way you would plan the stages of work. . . . Although requiring students to follow a plan that you provide does not give them practice developing their own plan, it does help them think about the component parts of a complex task, as well as their logical sequencing. p. 207

Have students develop their own plan. . . . When students’ planning skills have developed, . . . require them to submit a plan as the first “deliverable” in larger assignments. This could be in the form of a project proposal, an annotated bibliography, or a time line that identifies the key stages of work. Provide feedback on their plan. p. 207

Make planning the central goal of the assignment. . . . Assign some tasks that focus solely on planning. For example, instead of solving or completing a task, students could be asked to plan a solution strategy for a set of problems that involves describing how they would solve each problem. . . . Follow-up assignments can require students to implement their plans and reflect on their strengths and deficiencies. p. 208

Applying the Plan and Monitoring Progress

Once students have a plan and begin to apply [it], they need to monitor their performance [by asking themselves], “Is this strategy working, or would another one be more productive?” . . . Students who naturally monitor their own progress and try to explain to themselves what they are learning along the way generally show greater learning gains as compared to students who engage less often in self-monitoring and self-explanation activities. . . . Students who [are] taught or prompted to monitor their own understanding or to explain to themselves what they [are] learning [make] greater learning gains relative to students who [are not taught to self-monitor]. [Moreover], research has shown that when students are taught to ask each other a series of comprehension-monitoring questions during reading, they learn to self-monitor more often and hence learn more from what they read. pp. 197-199 [How can you help students learn to self-monitor?]

Provide simple heuristics for self-correction. Teach students basic heuristics for quickly assessing their own work and identifying errors. For example, encourage students to ask themselves, “Is this a reasonable answer, given the problem?”. . . . There are often disciplinary heuristics that students should also learn to apply. p. 208

Have students do guided self-assessments. Require students to assess their own work against a set of criteria that you provide. . . . However, students may not be able to accurately assess their own work without first seeing this skill demonstrated or getting some explicit instruction and practice. p. 209

Require students to reflect on and annotate their own work. . . . Require as a component of the assignment that students explain what they did and why, describe how they responded to various challenges, and so on. . . . Requiring reflection or annotation helps students become more conscious of their own thought processes and work strategies and can lead them to make more appropriate adjustments. p. 209

Use peer review/reader response. Have students analyze their classmates’ work and provide feedback. Reviewing one another’s work can help students evaluate and monitor their own work more effectively and then revise it accordingly. However, peer review is generally only effective when you give student reviewers specific criteria about what to look for and comment on. pp. 209-210

Reflecting and Modifying Approach

Even when students monitor their performance . . . there is no guarantee that they will adjust or try more effective alternatives [because they may be resistant to changing their methods or] may lack alternative strategies. . . . Good problem solvers will try new strategies if their current strategy is not working, whereas poor problem solvers will continue to use a strategy even after it has failed. . . . [Using alternative strategies may not occur] if the perceived cost of switching to a new approach is too high. Such costs include the time and effort it takes to change one’s habits as well as the fact that new approaches, even if better in the long run, tend to under-perform more practiced approaches at first. . . . Research shows that people will often continue to use familiar strategies that work moderately well rather than switch to a new strategy that would work better. pp. 199-200 [If it is so difficult for students to change, how can you get them to reflect on and modify their approaches?]

Provide activities that require students to reflect on their performances. . . . For example, [you could ask students to] answer questions such as: What did you learn from doing this project? What skills do you need to work on? How would you prepare differently or approach the final assignment based on feedback across the semester? How have your skills evolved across the last three assignments? p. 210

Prompt students to analyze the effectiveness of their study skills. When students learn to reflect on the effectiveness of their own approach, they are able to identify problems and make necessary adjustments. A specific example of a self-reflective activity is an “exam wrapper.” . . . An exam wrapper might ask students (1) what kinds of errors they made . . . , (2) how they studied . . . , and (3) what they will do differently in preparation for the next exam. pp. 210-211

Present multiple strategies. Show students multiple ways that a task or problem can be conceptualized, represented, or solved. . . . Students can be asked to solve problems in multiple ways and then discuss the advantages and disadvantages of the different methods. Exposing students to different approaches and analyzing their merits can highlight the value of critical exploration. p. 211

Create assignments that focus on strategizing rather than implementation. Have students propose a range of potential strategies and predict their advantages and disadvantages rather than actually choosing and carrying one through. . . . By putting the emphasis of the assignment on thinking the problem through, rather than solving it, students get practice evaluating strategies in relation to their appropriate or fruitful application. pp. 211-212

Researchers found a pattern that linked students’ beliefs about intelligence with their study strategies and learning beliefs. . . . Students who believe intelligence is fixed have no reason to put in time and effort to improve because they believe their efforts will have little or no effect. . . . Students who believe that intelligence is incremental (that is, skills can be developed that will lead to greater academic success) have a good reason to engage their time and effort in various study strategies because they believe this will improve their skills and hence their outcomes. . . . Beliefs about one’s own abilities can also have an impact on metacognitive processes and learning. pp. 200-201

Implications: Students tend not to apply metacognitive skills as well or as often as they should. This implies that students will often need our support in learning, refining, and effectively applying basic metacognitive skills. To address these needs requires us as instructors to consider the advantages these skills can offer students in the long run and then, as appropriate, to make the development of metacognitive skills part of our course goals. . . . The most natural implication may be to address these issues as directly as possible, by working to raise students’ awareness of the challenges they face and by considering some of the interventions that helped students productively modify their beliefs about intelligence, and at the same time, to set reasonable expectations for how much improvement is likely to occur. pp. 202-203 [To help students overcome their inaccurate understanding of intelligence and learning . . . ]

Address students’ beliefs about learning directly [by] discussing the nature of learning and intelligence . . . to disabuse them of unproductive beliefs (for example, “I can’t draw” or “I can’t do math”) and to highlight the positive effects of practice, effort, and adaptation. p. 212

Broaden students’ understanding of learning [by helping them understand that] you can know something at one level (recognize it) and still not know it (know how to use it). Consider introducing students to those various forms of knowledge so that they can more accurately assess the task, . . . assess their own strengths and weaknesses, . . . and identify gaps in their education. pp. 212-213

Help students set realistic expectations [by recalling] your own frustrations as a student and [describing] how you (or a famous figure in your field) overcame various obstacles. . . . [This can] help students avoid unproductive and often inaccurate attributions about themselves. p. 213

[In addition, you can also help students better understand metacognition by modeling your own metacognitive processes and helping students learn to scaffold their own metacognitive processes.] Scaffolding refers to the process by which instructors provide students with cognitive supports early in their learning, and then gradually remove them as students develop greater mastery and sophistication. . . . Instructors can give students practice working on discrete phases of the metacognitive process in isolation before asking students to integrate them. . . . Breaking down the process highlights the importance of particular stages. . . . A second form of scaffolding involves a progression from tasks with considerable instructor-provided structure to tasks that require greater or even complete student autonomy. p. 215

Scoring rubrics help the teacher show students what the goals are and help students understand why they received a particular grade or score. Students can see how their performance measures up against the prescribed criteria. Rubrics also make it easier for the teacher to maintain a standard that students should adhere to.

What Are Scoring Rubrics?

A scoring rubric sets out standard levels of performance against criteria for student work. It is used to score or grade written exams, presentations, and similar work. Rubrics have sets of scores and values for criteria that are grouped into categories. For exams, a rubric provides an objective basis for judging student performance. It is also used to delineate consistent criteria for grading. It gives students a basis for self-reflection and peer review. A rubric can be used to measure a stated objective and to rate performance. Rubrics are organized to treat the criteria one at a time and to be used with similar specific tasks, such as those that apply to exams.

Benefits of Scoring Rubrics

The main benefit of a rubric is to test the performance of students. Using rubrics, you can observe the results of students' work. Rubrics let students understand the goals that they have to achieve. They provide a way to develop our goals and communicate them to the students.

How Do Rubrics Help?

Rubrics help students and teachers understand the standards against which work will be measured. Rubrics are multidimensional sets of scoring guidelines that can be used to provide consistency in evaluating student work. They spell out scoring criteria so that multiple teachers, using the same rubric for a student's essay, for example, would arrive at the same score or grade.

Rubrics are used from the initiation to the completion of a student project. They provide a measurement system for specific tasks and are tailored to each project, so as the projects become more complex, so do the rubrics. Rubrics are great for students: they let students know what is expected of them, and demystify grades by clearly stating, in age-appropriate vocabulary, the expectations for a project. They also help students see that learning is about gaining specific skills (both in academic subjects and in problem-solving and life skills), and they give students the opportunity to do self-assessment to reflect on the learning process.

Teacher Eeva Reeder says using scoring rubrics "demystifies grades and helps students see that the whole object of schoolwork is attainment and refinement of problem-solving and life skills." Rubrics also help teachers authentically monitor a student's learning process and develop and revise a lesson plan. They provide a way for a student and a teacher to measure the quality of a body of work. When a student's assessment of his or her work and a teacher's assessment don't agree, they can schedule a conference to let the student explain his or her understanding of the content and justify the method of presentation.

There are two common types of rubrics: team and project rubrics.

Team Rubrics

A team rubric is a guideline that lets each team member know what is expected of him or her. For example, a team rubric contains detailed descriptions for tasks that will be done while the students are working as a team, and states acceptable degrees of behavior. It also defines the consequences for a team member who is not participating, and lists actions or tasks required of each team member for the completion of a successful project, such as the following:

Did the person participate in the planning process?

How involved was each member?

Was the team member's work to the best of his or her ability?

Shows the quantitative value of the behaviors or actions.

"For as long as assessment is viewed as something we do 'after' teaching and learning are over, we will fail to greatly improve student performance, regardless of how well or how poorly students are currently taught or motivated."
- Grant Wiggins, Ed.D., President and Director of Programs, Relearning by Design, Ewing, New Jersey

Project Rubrics

A project rubric lists the requirements for the completion of a project-based-learning lesson. The project is usually some sort of presentation: a word-processed document, a poster, a model, a multimedia presentation, or a combination of presentations. The teacher can create a project rubric, or students can collaborate, helping set goals for the project and suggesting how their work should be evaluated. Together, the teacher and the students can answer the following questions:

What is the quality of the work?

How do you know the content is accurate?

How well was the presentation delivered?

How well was the presentation designed?

What was the main idea?

Sample Rubrics

Look at these rubrics from several websites, which show team rubrics and project rubrics for various subjects and grade levels.

Collaboration Rubric for Group Work from a high school science project, San Diego City Schools